DataOps Framework

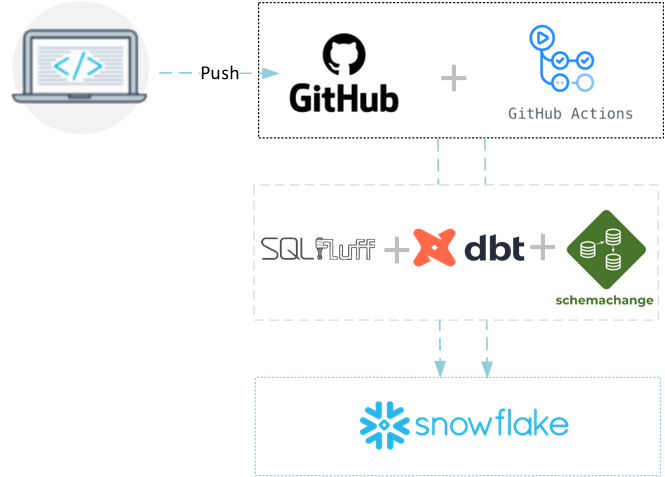

We have developed our DataOps Framework with long-standing software engineering principles in mind.

The Framework is a collection of open source tools and services that we have lovingly stitched together through a mix of bespoke customisations and pipelines.

This allows data professionals and data teams to work together or individually on features within their local development environments and continuously integrate (CI) and continuously deploy (CD) those features.

The Framework provides atomic unit testing, automatic generation of documentation, code standardisation (linting) and security model adherence.

Combined with version control mechanisms such as rebasing and pull requests, this lets teams deliver value to the organisation seamlessly, iteratively and confidently.

Deployment becomes a daily or hourly event rather than a monthly or quarterly prayer session!
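As a rough illustration of the checks described above, the sketch below lists one possible order of CI stages and stops at the first failure. It uses tools from the stack listed under Technologies; the stage names, the `models` path and the selection of commands are assumptions for illustration, not the Framework's actual pipeline definition.

```python
import shlex
import subprocess

# Hypothetical stage list: the "models" path and the choice of commands
# are assumptions, not the Framework's real workflow.
CI_STAGES = [
    ("lint", ["sqlfluff", "lint", "models", "--dialect", "snowflake"]),
    ("build and test", ["dbt", "build"]),
    ("docs", ["dbt", "docs", "generate"]),
]


def render_plan(stages):
    """Return one printable line per stage, in execution order."""
    return [f"{name}: {shlex.join(cmd)}" for name, cmd in stages]


def run_stages(stages):
    """Run each stage in order; check=True raises on the first failure."""
    for name, cmd in stages:
        print(f"running {name}")
        subprocess.run(cmd, check=True)


# Print the plan only, so the sketch runs without the tools installed.
for line in render_plan(CI_STAGES):
    print(line)
```

Calling `run_stages(CI_STAGES)` inside a configured project would execute the same commands for real. Failing fast means a lint error blocks the build before any transformation runs.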

Technologies

The main components of the tech stack are:

- GitHub Actions - runs the workflows for Continuous Integration (CI) and Continuous Deployment (CD), as well as local environment management and automated documentation.

- SQLFluff - ensures your SQL standards are met and implemented consistently.

- dbt - enables your data team to automate data and unit testing as well as the deployment of data transformation processes.

- Schemachange - a lightweight, Python-based database change management tool that manages the Snowflake objects dbt can't handle (which isn't many).

- Terraform - an Infrastructure as Code (IaC) tool that maintains non-database objects (privileges, warehouses, integrations).

- Snowflake - our primary Data Cloud target. We rely heavily on Snowflake's Zero Copy Clone feature, along with a sprinkle of custom code to fill the gaps.
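To show how Zero Copy Clone can support isolated development environments, here is a minimal sketch that builds a clone statement for a feature branch. The `DEV_` prefix, the `ANALYTICS_PROD` source database and the function name are hypothetical; only the `CREATE OR REPLACE DATABASE ... CLONE ...` syntax comes from Snowflake itself.

```python
import re

# Unquoted identifier shape: starts with a letter or underscore,
# followed by letters, digits, underscores or dollar signs.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_$]*")


def clone_database_sql(branch: str, source_db: str = "ANALYTICS_PROD") -> str:
    """Build a CREATE ... CLONE statement for a branch-specific database.

    The naming scheme (DEV_<BRANCH>) is an assumption for illustration.
    """
    # Collapse every run of non-alphanumeric characters into one underscore.
    suffix = re.sub(r"[^A-Za-z0-9]+", "_", branch).strip("_").upper()
    if not suffix:
        raise ValueError("branch name has no usable characters")
    if not _IDENTIFIER.fullmatch(source_db):
        raise ValueError(f"unsafe source database name: {source_db!r}")
    return f"CREATE OR REPLACE DATABASE DEV_{suffix} CLONE {source_db};"


print(clone_database_sql("feature/add-orders-model"))
# prints: CREATE OR REPLACE DATABASE DEV_FEATURE_ADD_ORDERS_MODEL CLONE ANALYTICS_PROD;
```

Because a clone shares storage with its source until data changes, each branch can get its own database without duplicating the underlying data.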

Although these components are ready out of the box with only minor configuration changes for your environment, we can also work with you to adapt things to your needs.

For example:

- You want to use Azure DevOps Pipelines instead of GitHub Actions.

- You want to use another data platform instead of Snowflake. No problem: dbt works with BigQuery, Databricks, Redshift and Postgres as well as Snowflake.

What does it mean for you?

Instead of starting from scratch and wondering whether you are doing the right thing, you start from a solid foundation: open source technologies that are known to work together, backed by training and support to take you from zero to hero in no time.

How easy will it be to adopt?

We believe that once you start using the different technologies you won't look back. We have made life as simple as possible, reducing your total onboarding time, whether it's adding our framework to your toolkit or onboarding new staff. We have put a lot of time and effort into making the documentation complete and easy to follow.

To adopt the framework, we clone the latest version of our codebase into your source code repository and then run a set of automated scripts, which will:

- Apply your specific configuration to the code base

- Set up command line capabilities for dbt, snowsql, schemachange and terraform

- Set up the profiles needed to run dbt, snowsql, schemachange and terraform locally

In essence, you could be fully up and running within hours, not days.
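As an example of the profile setup step, the sketch below writes a dbt `profiles.yml` for a Snowflake target. It is not the framework's own script: the function name, the profile values and the environment variable names are hypothetical, and credentials are read through dbt's `env_var` function rather than stored in the file.

```python
import tempfile
from pathlib import Path
from string import Template

# string.Template uses $name placeholders, so dbt's {{ ... }} Jinja
# expressions pass through untouched.
PROFILE_TEMPLATE = Template("""\
$profile:
  target: dev
  outputs:
    dev:
      type: snowflake
      account: "{{ env_var('SNOWFLAKE_ACCOUNT') }}"
      user: "{{ env_var('SNOWFLAKE_USER') }}"
      password: "{{ env_var('SNOWFLAKE_PASSWORD') }}"
      role: $role
      warehouse: $warehouse
      database: $database
      schema: $schema
      threads: 4
""")


def write_dbt_profile(target_dir: Path, **settings: str) -> Path:
    """Render the template into target_dir/profiles.yml and return its path."""
    target_dir.mkdir(parents=True, exist_ok=True)
    path = target_dir / "profiles.yml"
    # substitute() raises KeyError if any placeholder is missing.
    path.write_text(PROFILE_TEMPLATE.substitute(settings))
    return path


# Write to a temporary directory so the sketch never touches ~/.dbt.
with tempfile.TemporaryDirectory() as tmp:
    profile_path = write_dbt_profile(
        Path(tmp),
        profile="dataops_demo",   # hypothetical values throughout
        role="TRANSFORMER",
        warehouse="DEV_WH",
        database="DEV_DB",
        schema="dbt_dev",
    )
    print(profile_path.read_text())
```

Generating the file from a template keeps every developer's local profile consistent, while the environment variables keep secrets out of the repository.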

What level of documentation is provided?

We have fully documented each part of the system so that you know how it works, covering:

- Project Structures

- Best practices

- Coding Standards

- Data Governance

- Recommended Security Adoption

- Testing

- Getting things running locally

Want to know more?

We will be publishing a series of blogs explaining the framework and how we built it to solve real-world business problems, so look out for them. You can see the current blogs at the bottom of this page.

Alternatively, contact us for a demo or ask us how you can adopt the framework today.

Contact us today for a demo

Testimonials

Data Engineers have helped Mr Apple transform our data operations, introducing their framework which streamlines and enhances our data movements, pipelines and transformations. Harnessing the power of Azure, Snowflake and DBT, our data environment is gold standard.

The Data Engineers team are all highly skilled experts in their field who provide world class guidance and support to me and my team.